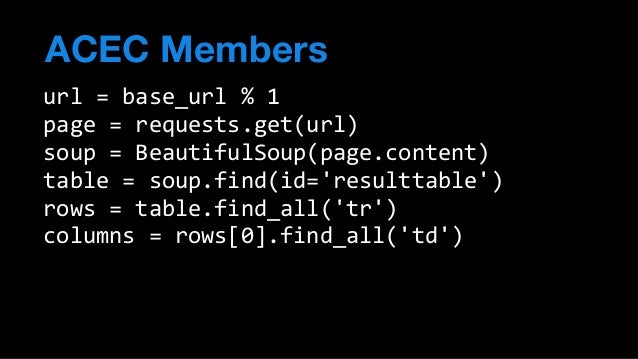

Beautiful Soup is a Python library for getting data out of HTML, XML, and other markup languages. It is a data parser: it helps you pull particular content from a webpage, strip the HTML markup, and save the information. That makes it a useful web scraping tool for cleaning up and parsing documents you have pulled down from the web.

‘Web scraping’ is a technique for getting a web page's data in HTML format. With it, you can get not only the text and image data inside the target page, but also find out which tags are used and which links are included. When you need a lot of data for research, this is one of the common ways to get it.

Using a parser

Unlike a CSV file or an Excel sheet, however, raw HTML is rough, disordered data.

Take the case of getting raw HTML data from Bleacher Report. A plain HTTP request returns the page's markup as one long, unorganized string.
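A minimal sketch of such a request, using only the standard library. The function name `fetch_html`, the User-Agent string, and the Bleacher Report URL in the usage comment are assumptions made for illustration, not the original code.

```python
from urllib.request import Request, urlopen


def fetch_html(url, timeout=10):
    """Return the raw, unparsed HTML of `url` as a string."""
    # Some sites reject requests without a browser-like User-Agent;
    # the value below is an arbitrary placeholder.
    request = Request(url, headers={"User-Agent": "Mozilla/5.0 (scraper example)"})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage (needs network access; the URL is an assumed example):
# raw_html = fetch_html("https://bleacherreport.com/")
# print(raw_html[:500])
```

The result is a single string of markup, which is why the next step is to hand it to a parser.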

It is inconvenient to find the target data in output like this. You need to organize it before going further. You could write a parser yourself, but that is not an efficient approach. Python has some great modules for this, and I will use one of them, Beautiful Soup.

Before going on, install it via the Python package manager with `pip install beautifulsoup4`.

Once parsed, the raw HTML data is converted to a BeautifulSoup object. Now you can get data by text or by tag info.
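The conversion might look roughly like this. The sample HTML and the helper name `parse_page` are made up for illustration; `html.parser` is Python's built-in parser backend, chosen here so that no extra dependency is needed.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<html>
  <head><title>Sample page</title></head>
  <body>
    <h1 class="headline">Top story</h1>
    <a href="/articles/1">First article</a>
    <a href="/articles/2">Second article</a>
  </body>
</html>
"""


def parse_page(raw_html):
    """Convert a raw HTML string into a BeautifulSoup object."""
    return BeautifulSoup(raw_html, "html.parser")


soup = parse_page(SAMPLE_HTML)

# Access data by tag name, by attribute, or as plain text.
title = soup.title.get_text()
headline = soup.find("h1", class_="headline").get_text(strip=True)
links = [a["href"] for a in soup.find_all("a", href=True)]
```

Here `title` holds the page title, `headline` the text of the `h1` tag, and `links` the `href` value of every anchor.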

Why a browser is needed

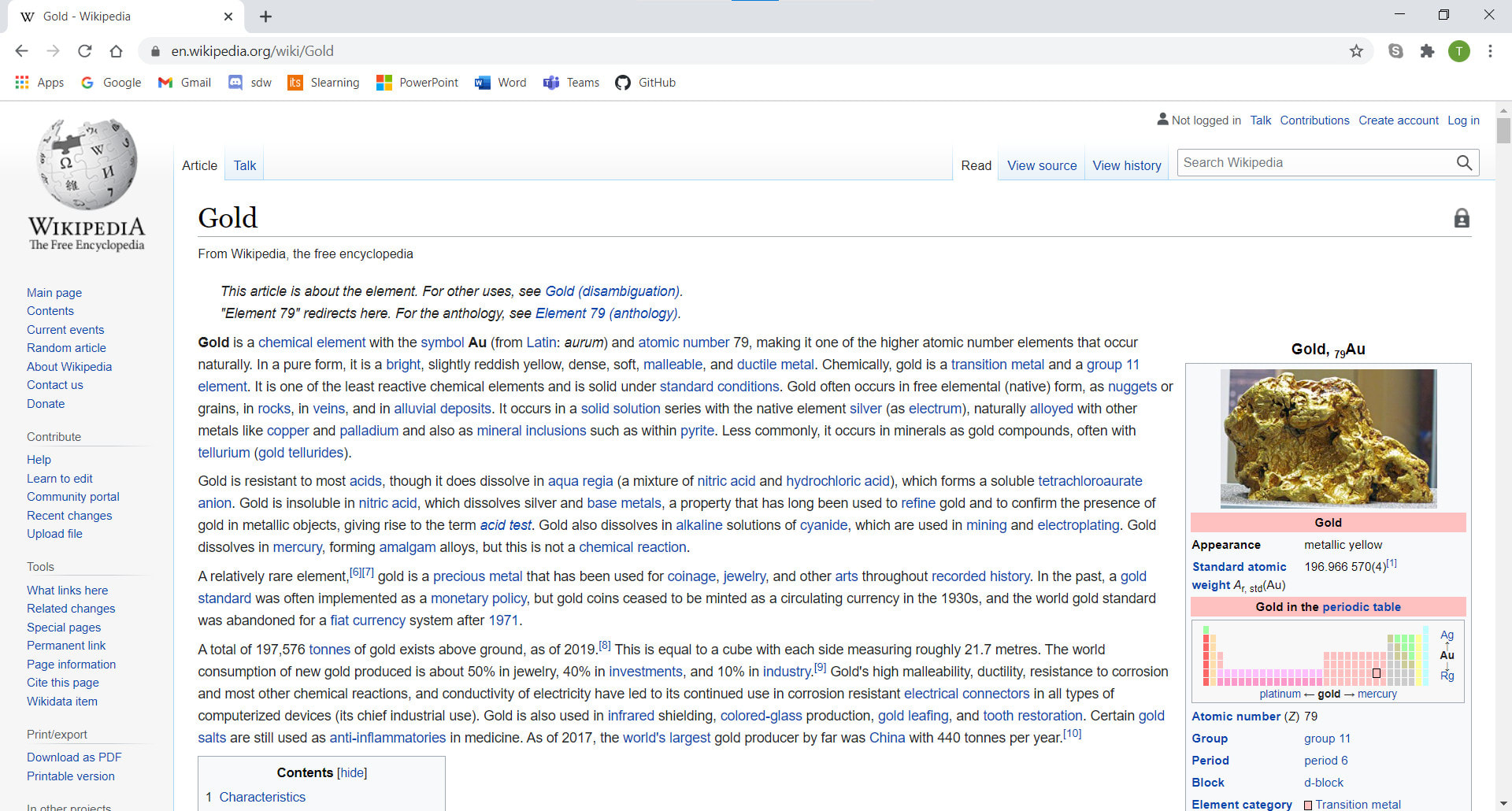

There is a problem in the process above, not in the parser but in the HTML request step. If we just fetch the data with a plain HTTP request, we cannot get the dynamically rendered parts, because they are not in the HTML file before the page loads. Here is an example of this case.

Take the main page of the Samsung SDS official site.

That is how the main page normally looks. Now let’s check how it looks after disabling JavaScript.

The menu at the top has disappeared, because it is rendered dynamically by a JavaScript controller. The menu text will likewise not be scraped when you get this HTML page with a plain HTTP request. So, to get all of this data, you need the fully rendered result. For that, the page has to be rendered in some kind of ‘fake browser’, and that’s why we will use a ‘headless browser’.
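The effect can be reproduced without a browser. In the sketch below, the markup is invented to mimic a page whose menu is filled in by a script (it is not the Samsung SDS page); a parser that only sees the HTTP response finds the menu container empty.

```python
from bs4 import BeautifulSoup

# Hypothetical page: the menu items exist only inside the script,
# so they appear in the DOM only after a browser runs the JavaScript.
STATIC_HTML = """
<html><body>
<ul id="menu"></ul>
<script>
  document.getElementById("menu").innerHTML =
      "<li>Products</li><li>Support</li>";
</script>
</body></html>
"""

soup = BeautifulSoup(STATIC_HTML, "html.parser")

# The parser does not execute scripts, so the list has no <li> children.
menu_items = [li.get_text() for li in soup.find(id="menu").find_all("li")]

# The menu text is present only as source code inside the <script> tag.
script_text = soup.find("script").string
```

`menu_items` comes back empty even though the menu labels are visible in `script_text`, which is exactly the gap a headless browser closes.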

Wikipedia describes a headless browser as a web browser without a graphical user interface.

Scraping via a headless browser


To make a scraper with a headless browser, we need the headless browser itself and a module to drive it from code. I will use PhantomJS as the headless browser and run it with Selenium. You need to install both first.

PhantomJS can be installed by downloading it from its main page, or through brew or npm. Either package manager works.

Next, make a class for the scraper.

HBScraper is the class for getting ‘loaded’ page data. Its `__init__` method initializes browser settings such as the max loading time and the window size. You can scrape a page and get the rendered result from the browser with `scrape_page`.
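The original class is not reproduced here, so the following is only a sketch of what such a class might look like. The parameter names (`driver`, `max_load_seconds`, `window_size`), their defaults, and the `close` method are assumptions. It also assumes Selenium 3.x, where `webdriver.PhantomJS` is still available (it was removed in Selenium 4), and a `phantomjs` binary on the PATH.

```python
class HBScraper:
    """Fetch fully rendered page source through a headless browser."""

    def __init__(self, driver=None, max_load_seconds=30, window_size=(1280, 800)):
        if driver is None:
            # Imported lazily so the class can also wrap another
            # WebDriver-like object passed in by the caller.
            from selenium import webdriver

            # Assumption: Selenium 3.x with PhantomJS installed.
            driver = webdriver.PhantomJS()
        self.driver = driver
        # Browser settings: maximum page load time and window size.
        self.driver.set_page_load_timeout(max_load_seconds)
        self.driver.set_window_size(*window_size)

    def scrape_page(self, url):
        """Load `url` in the browser and return the rendered HTML."""
        self.driver.get(url)
        return self.driver.page_source

    def close(self):
        """Shut the browser process down."""
        self.driver.quit()


# Usage (needs Selenium 3.x and PhantomJS; the URL is an assumed example):
# scraper = HBScraper(max_load_seconds=20)
# rendered_html = scraper.scrape_page("https://www.samsungsds.com/")
# scraper.close()
```

Accepting an existing driver object is a design choice of this sketch: it lets the same class run with a different WebDriver, such as a headless Chrome or Firefox instance, without changing `scrape_page`.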

To use it, create an instance, call `scrape_page` with the target URL, and pass the returned HTML to the parser.

Because the page needs time to load, scraping with a headless browser takes longer than just fetching the data with an HTTP request. But to get the exact data of the target page, you will need to consider using it.


Reference